Why You Saw That Post (And Why It Matters)

BCM 206

We scroll without thinking.

A post appears, we react, maybe we believe it, and then we move on. But during this project, we started questioning something we had never really thought about before:

Why did this post appear in my feed in the first place?

That question became the foundation of our project, Context, Please. While misinformation is often treated as a problem of “fake content,” we realised it is not just about what is posted; it is about what becomes visible.

This project is supported by a digital artifact that demonstrates how the campaign operates within a simulated social media environment.

Rethinking the Challenge

At the beginning of this project, we approached misinformation in a fairly conventional way. Like most existing solutions, we focused on fact-checking and identifying what is true or false.

However, this approach quickly felt limited.

Even when misinformation is corrected, it continues to spread, gain engagement, and reappear in feeds. This led us to rethink the problem entirely.

Instead of asking:

Is this true or false?

We began asking:

Why am I seeing this?

This shift was influenced by several key theories. McLuhan (1994) argues that the medium shapes how information is understood. Castells (2011) explains that network structures shape visibility, determining what becomes prominent. Baudrillard (1994) suggests that repetition can make something feel real, regardless of its accuracy.

Together, these ideas highlight that in digital environments, visibility and engagement can become more powerful than truth.

From Idea to Campaign

Our early ideas focused on correcting misinformation, but this felt too similar to existing approaches. Through feedback and iteration, we shifted toward exposing the system behind misinformation instead.

This led to the creation of Context, Please: a campaign that encourages users to question why content appears in their feed, rather than simply judging whether it is true or false.

The goal was not to fact-check but to create awareness. Instead of giving answers, we designed content that would make users pause and reflect.

Making the Campaign

We chose Instagram as our main platform because of how strongly the feed shapes user experience. Content is not shown randomly; it is ranked based on engagement, interaction, and behaviour. As Zuboff (2024) explains, platforms are designed to maximise engagement, meaning visibility is driven by whatever keeps users interacting.

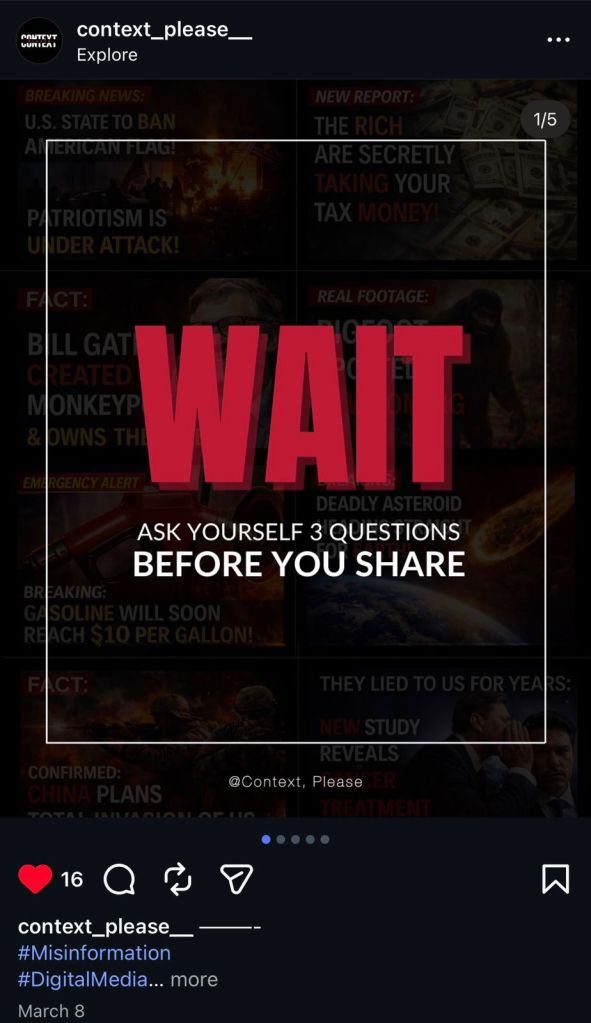

Rather than using a single format, the campaign combined interactive and reflective content.

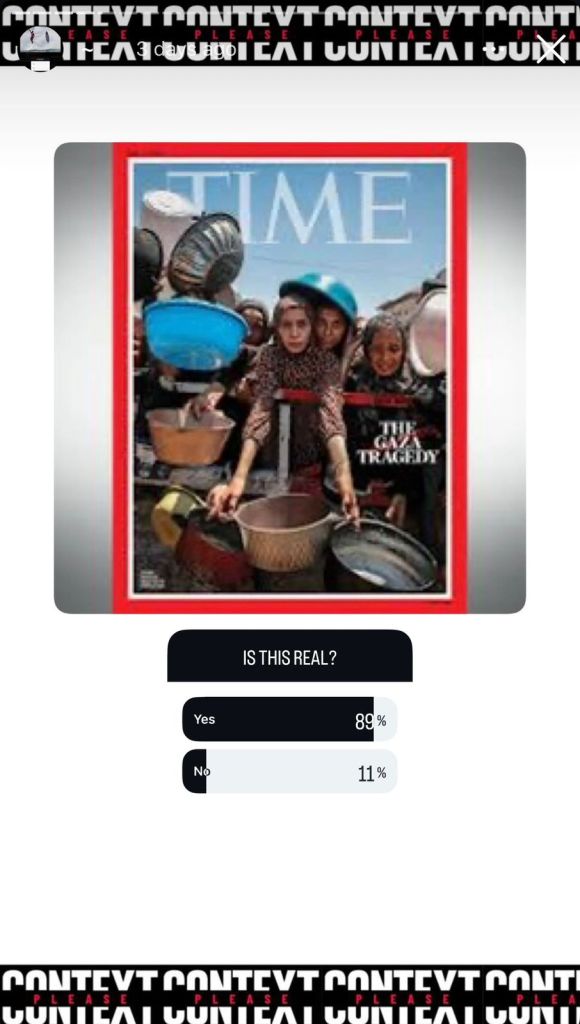

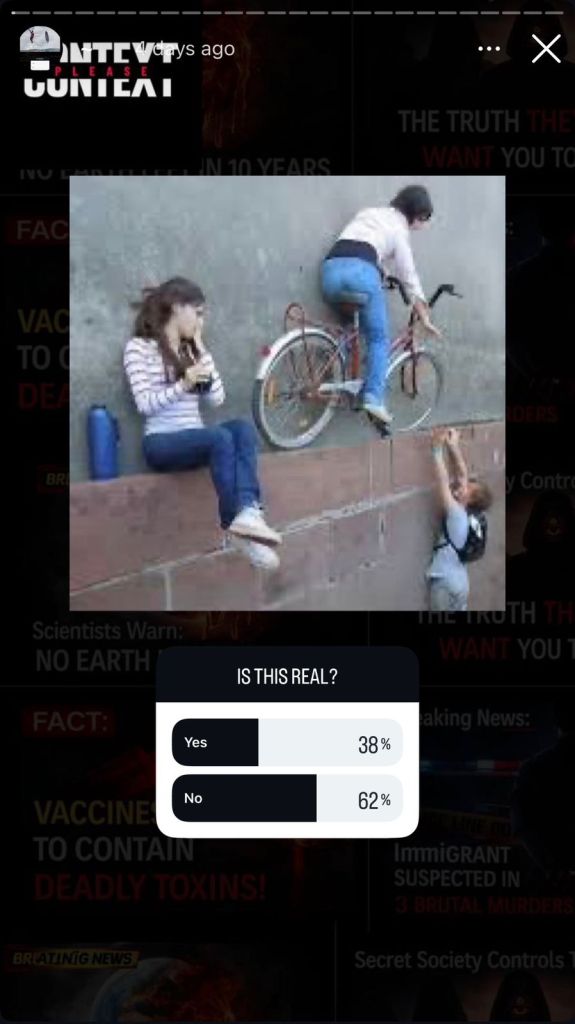

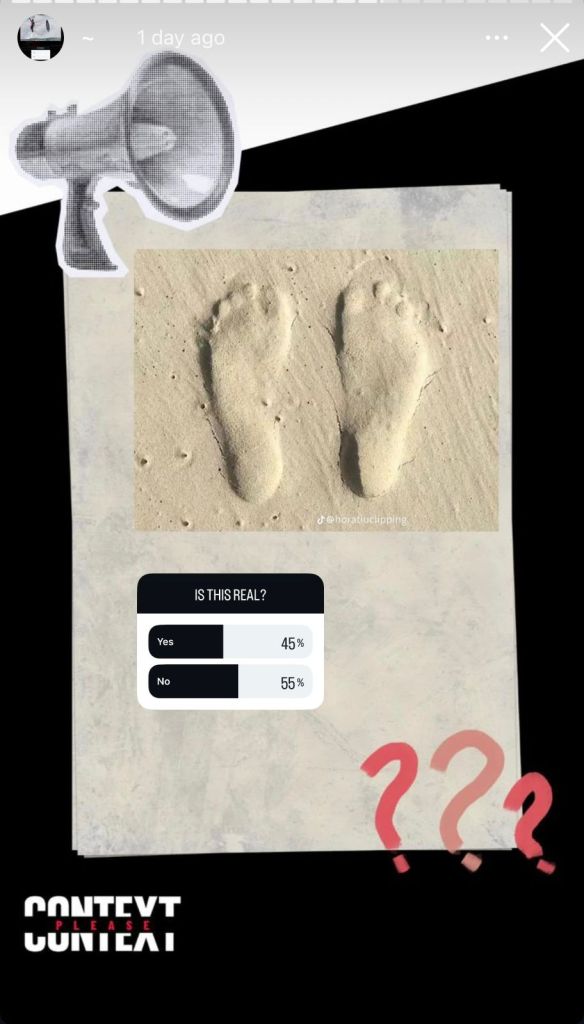

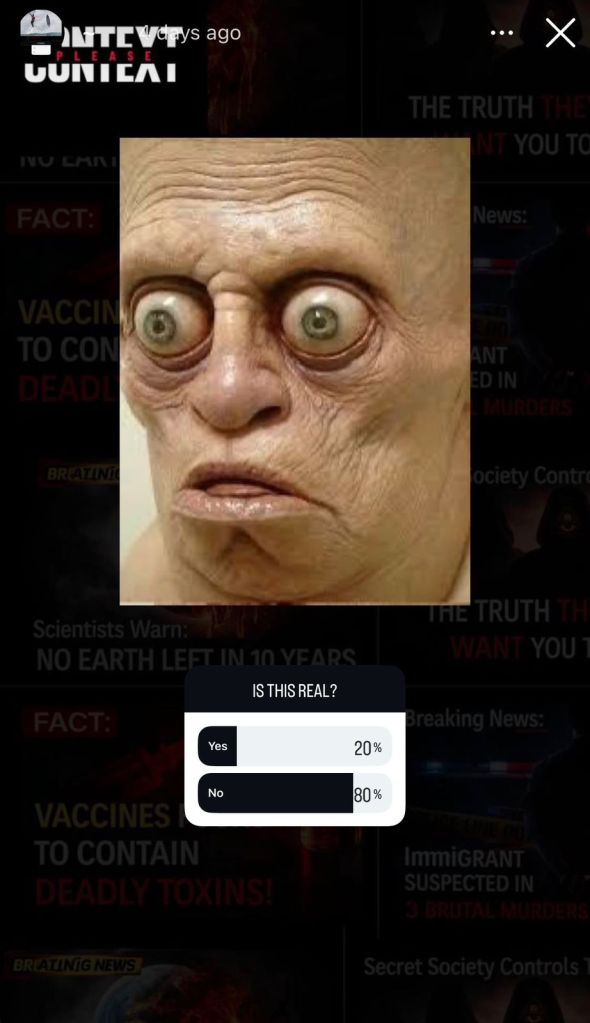

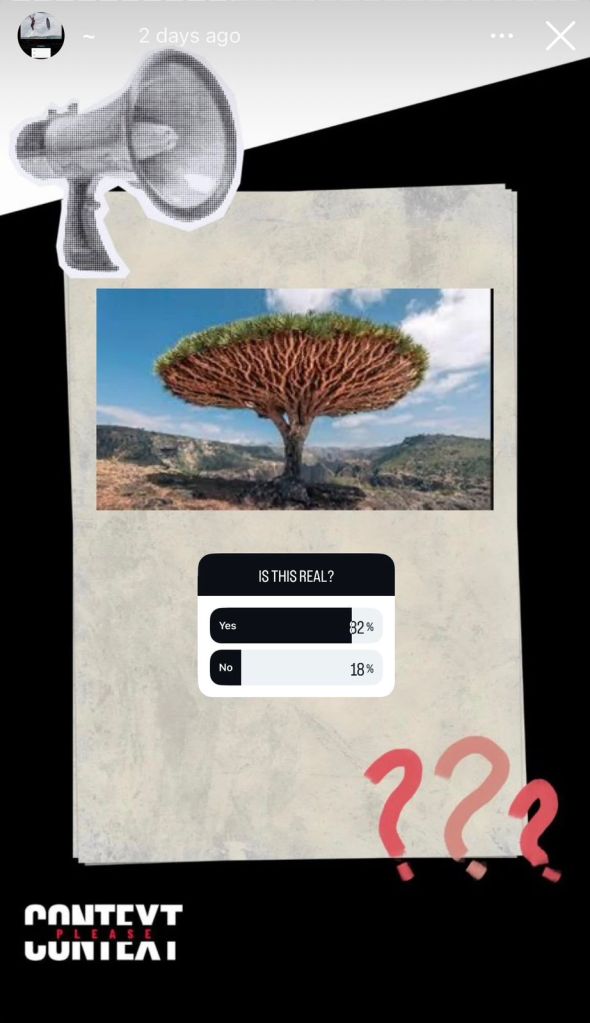

Through Instagram Stories, users were shown viral-style images and asked a simple question:

Is this real?

The results were then shared, revealing how often misinformation-style content was perceived as true.

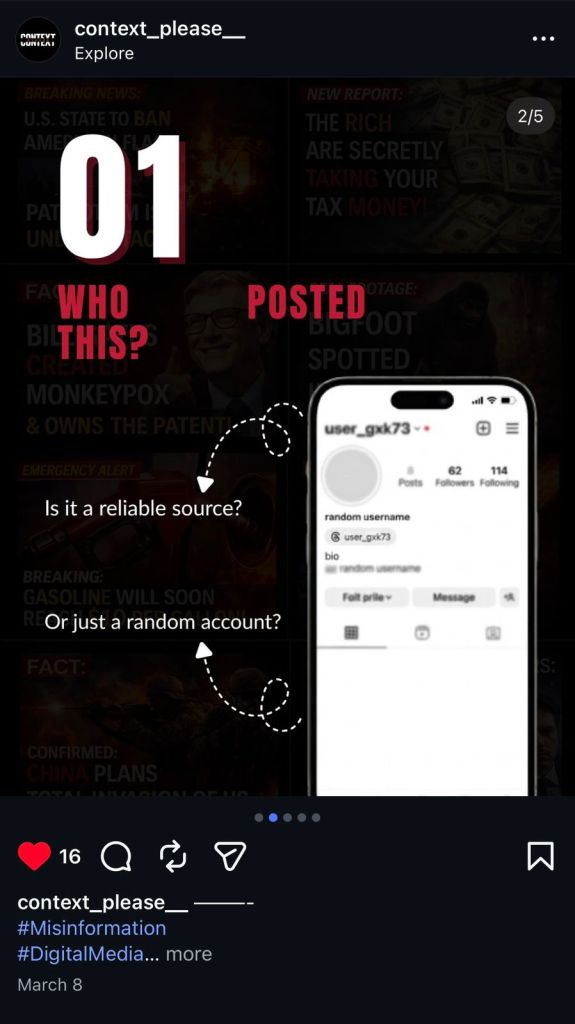

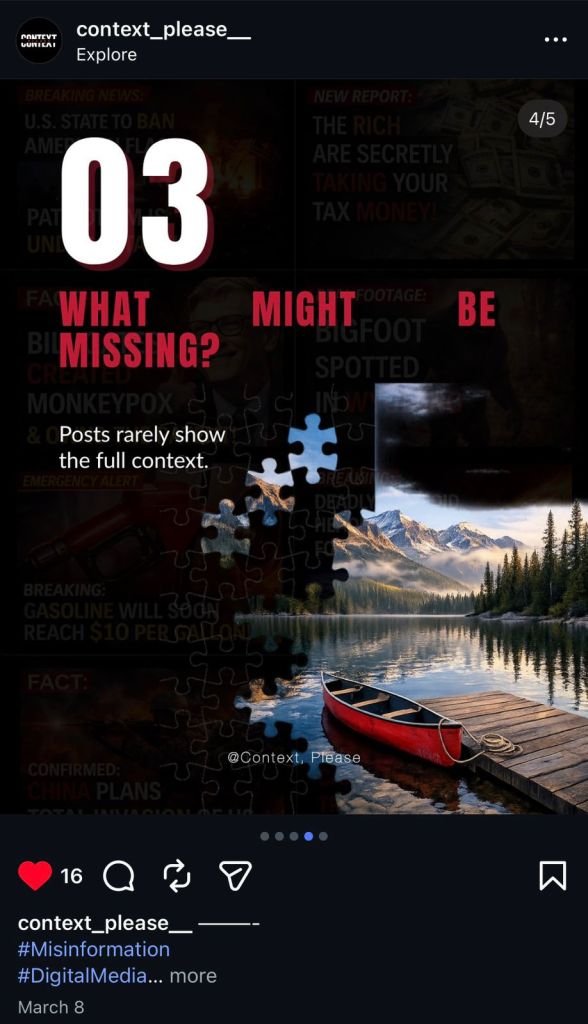

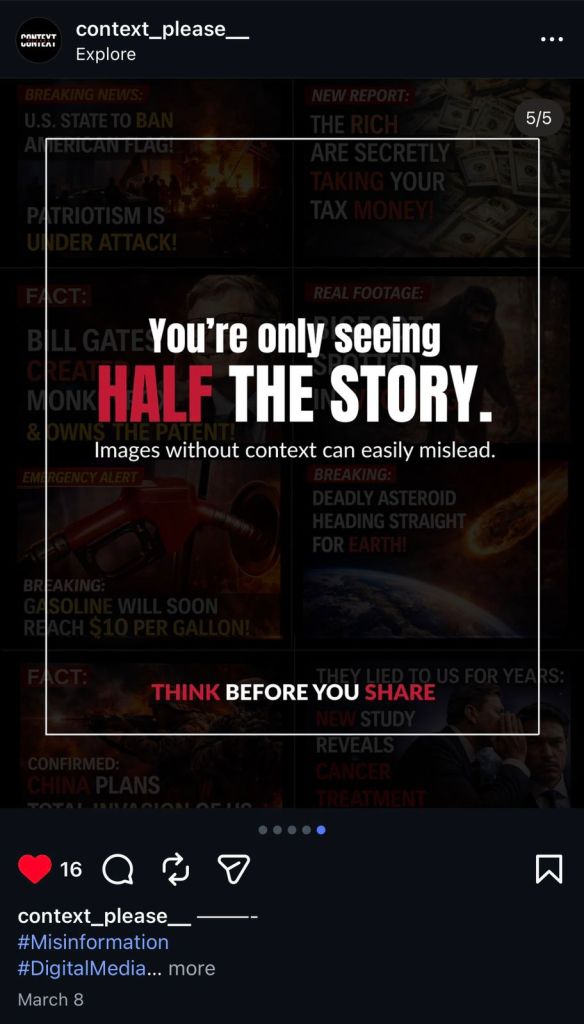

Alongside this, we created posts designed to slow users down and encourage reflection. These included prompts such as: Who is this from? Where is it from? What might be missing? Other posts reinforced the message with phrases like “You are only seeing half the story” and “Fake news, real damage.”

The results were particularly revealing. Misinformation-style posts received significantly more “true” responses than verified content. This is consistent with Vosoughi, Roy and Aral (2018), who found that false information spreads faster and reaches more people online than the truth does.

It also aligns with Pennycook and Rand (2019), who argue that people often believe misinformation because they do not critically evaluate what they see.

Reflection: What Changed

This project significantly changed our understanding of misinformation.

At the start, we saw it as a simple issue of true versus false information. However, through developing the campaign and analysing audience responses, we realised that misinformation is deeply connected to how platforms structure visibility.

The poll results showed that users were more likely to believe emotionally engaging posts, highlighting the role of presentation and repetition. This aligns with Del Vicario et al. (2016), who show that users tend to engage with information that reinforces their existing beliefs.

One of the biggest challenges we faced was the lack of algorithmic transparency. While we could simulate how content spreads, we could not fully access or explain how Instagram’s algorithm operates. This reinforced the idea that digital systems are powerful partly because they remain invisible.

Limitations

The project also had limitations.

As a prototype campaign, it reached a limited audience, meaning the results cannot be generalised.

Additionally, we were dependent on Instagram’s platform, which restricted our ability to fully control or analyse visibility systems. It was also difficult to measure long-term changes in user behaviour.

However, these limitations reflect the reality of working within digital platforms, where systems can be observed but not fully controlled.

Conclusion

Context, Please does not aim to remove misinformation.

Instead, it aims to disrupt how misinformation becomes believable.

By shifting attention from truth to visibility, the project highlights how platforms shape what users see, engage with, and believe.

In a digital environment where feeds are curated and ranked, understanding why we see something may be just as important as questioning whether it is true.

References

Baudrillard, J., 1994. Simulacra and simulation. University of Michigan Press.

Castells, M., 2011. The rise of the network society. John Wiley & Sons.

McLuhan, M., 1994. Understanding media: The extensions of man. MIT Press.

Del Vicario, M., Bessi, A., Zollo, F., Petroni, F., Scala, A., Caldarelli, G., Stanley, H.E. and Quattrociocchi, W., 2016. The spreading of misinformation online. Proceedings of the National Academy of Sciences, 113(3), pp.554-559.

Pennycook, G. and Rand, D.G., 2019. Lazy, not biased: Susceptibility to partisan fake news is better explained by lack of reasoning than by motivated reasoning. Cognition, 188, pp.39-50.

Vosoughi, S., Roy, D. and Aral, S., 2018. The spread of true and false news online. Science, 359(6380), pp.1146-1151.

Zuboff, S., 2024. The age of surveillance capitalism.